Cognata

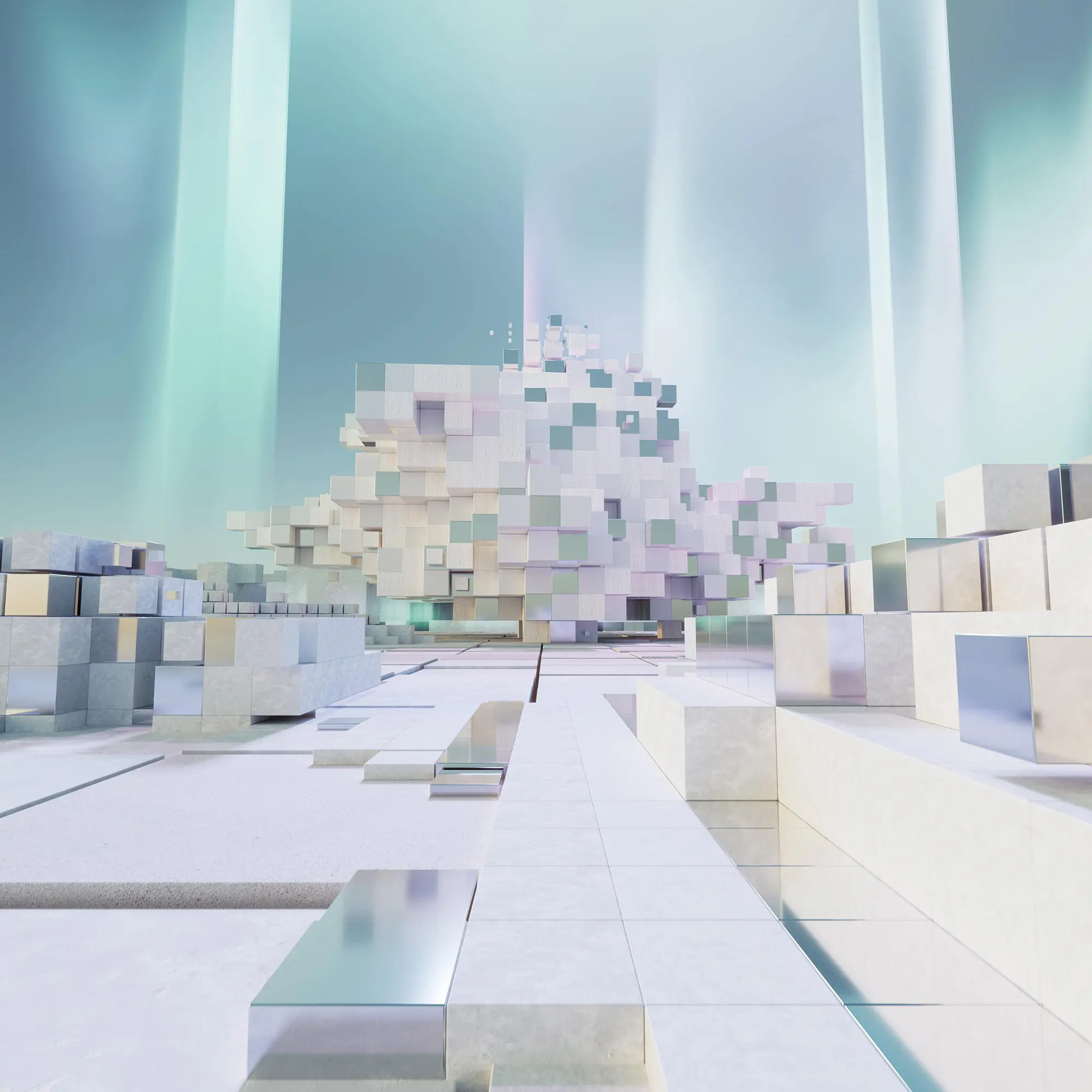

The MLCommons Cognata dataset is a collection of photorealistic, synthetic automotive data frames depicting urban and highway scenarios in several cities, under a range of weather conditions and times of day. It consists of data licensed for use by MLCommons Members.