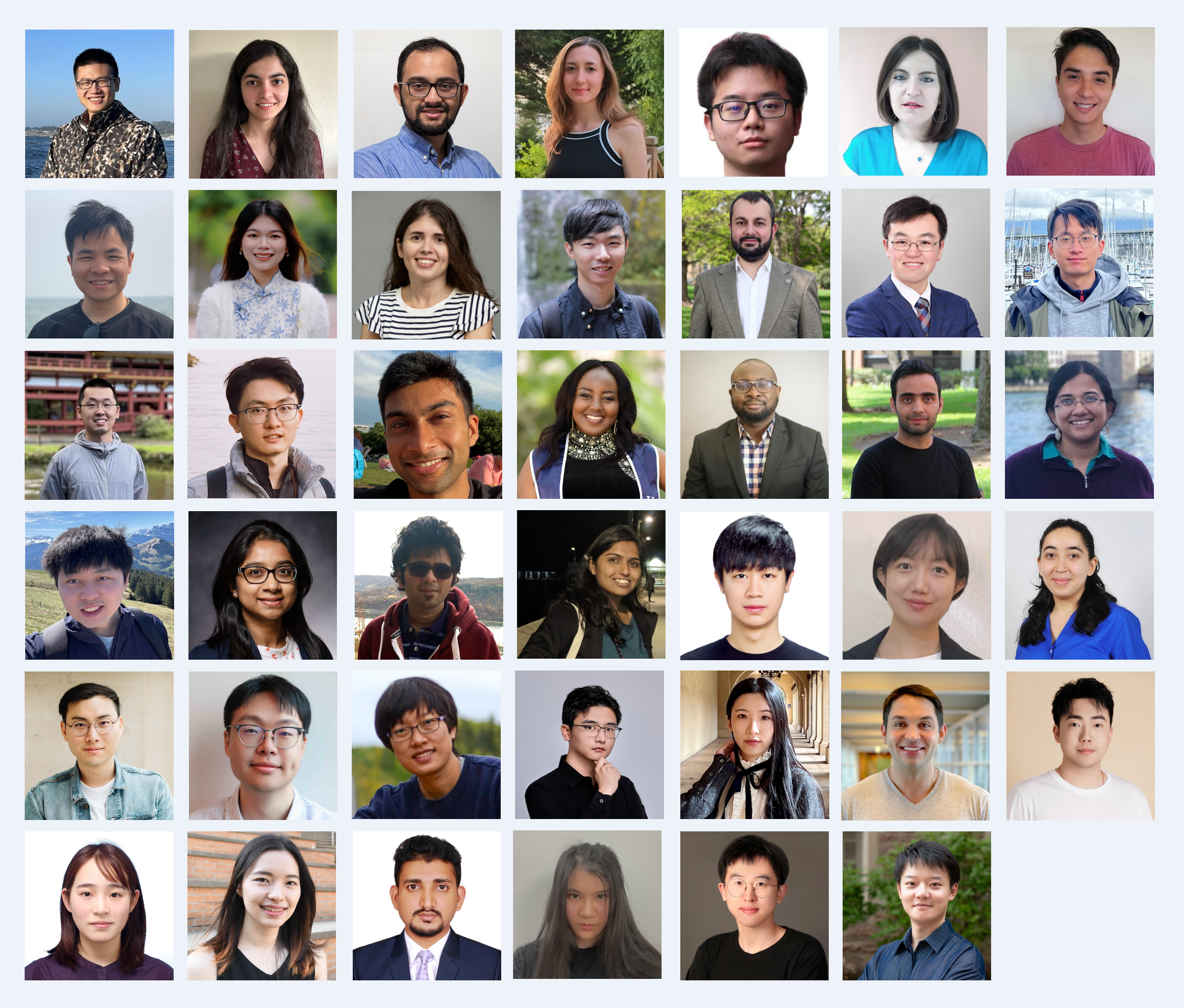

We are pleased to announce the second annual MLCommons Rising Stars cohort: 41 junior researchers from 33 institutions around the world! Selected from more than 170 applicants, these researchers have demonstrated excellence in Machine Learning (ML) and Systems research and stand out for both their contributions to date and their potential for future impact. This year, the program has expanded to include data systems research as an area of interest.

The MLCommons Rising Stars program provides a platform for talented young researchers working at the intersection of ML and systems to build connections with a vibrant research community, engage with industry and academic experts, and develop their skills. The program continues to promote diversity in the research community by seeking researchers from historically underrepresented backgrounds. We are pleased to welcome nine international Rising Stars to this year’s cohort.

As part of our commitment to fostering the growth of our Rising Stars, we are organizing a Rising Stars workshop at NVIDIA headquarters in Santa Clara, CA, in July. There, the cohort will showcase their work, explore research opportunities, build new skills through career-building sessions, and network with researchers across academia and industry.

“ML is a fast-growing field with rapid adoption across all industries, and we believe that the biggest breakthroughs are yet to come. By nurturing and supporting the next generation of researchers, both domestically and globally, we aim to foster an inclusive environment where these individuals can make groundbreaking contributions that will shape the future of ML and systems research. The Rising Stars program is our investment in the future, and we are excited to see the innovative ideas and solutions that these talented researchers will bring to the table,” said Vijay Janapa Reddi, MLCommons VP and Research Chair and a member of the Rising Stars steering committee.

We extend our warmest congratulations to this year’s Rising Stars and express our gratitude to everyone who applied.

We would also like to thank Rising Stars organizers Udit Gupta (Cornell Tech), Abdulrahman Mahmoud (Harvard), Lillian Pentecost (Amherst College), and Akanksha Atrey (Nokia Bell Labs), along with the rest of the organizing and program committees, for all their efforts in putting together the program and selecting an impressive cohort. Two organizing committee members, Sercan Aygun (University of Louisiana at Lafayette) and Husnain Mubarik (AMD), will also lead outreach and engagement activities for the cohort beyond the workshop.

Finally, we extend our appreciation to Kelly Berschauer (MLCommons), Ritika Borkar (NVIDIA), and Azalia Mirhoseini (Stanford) for their support in putting together the program and workshop.