Featured Articles

New MLPerf Training Benchmark Results Highlight Hardware and Software Innovations in AI Systems

Two new benchmarks added – highlighting language model fine-tuning and classification for graph data

Announcing MLCommons AI Safety v0.5 Proof of Concept

Achieving a major milestone towards standard benchmarks for evaluating AI Safety

The AI Safety Ecosystem Needs Standard Benchmarks

IEEE Spectrum contributed blog excerpt, authored by the MLCommons AI Safety working group

Blog

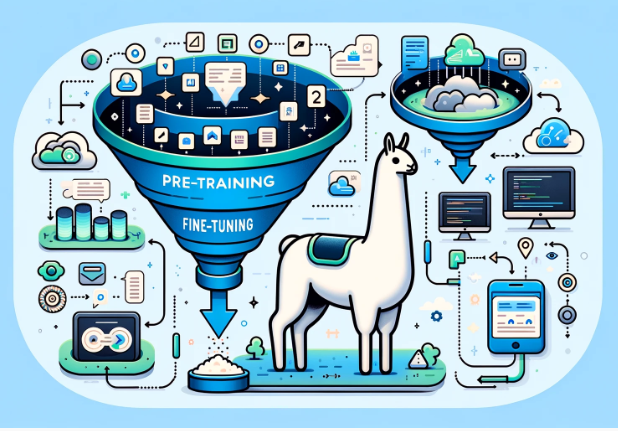

LoRA selected as the fine-tuning technique added to MLPerf Training v4.0

MLPerf Training task force shares insights on the selection process for a new fine-tuning benchmark

Introducing the MLPerf Training Benchmark for Graph Neural Networks

Continued evolution to keep pace with advancements in AI

Creating a comprehensive Test Specification Schema for AI Safety

Helping to systematically document the creation, implementation, and execution of AI safety tests

News

MLCommons Leadership Evolution

New leadership structure reflects our growing role in the global AI ecosystem

MLCommons AI Safety prompt generation expression of interest. Submissions are now open!

MLCommons is looking for prompt generation suppliers for its v1.0 AI Safety Benchmark Suite. This is a paid opportunity.

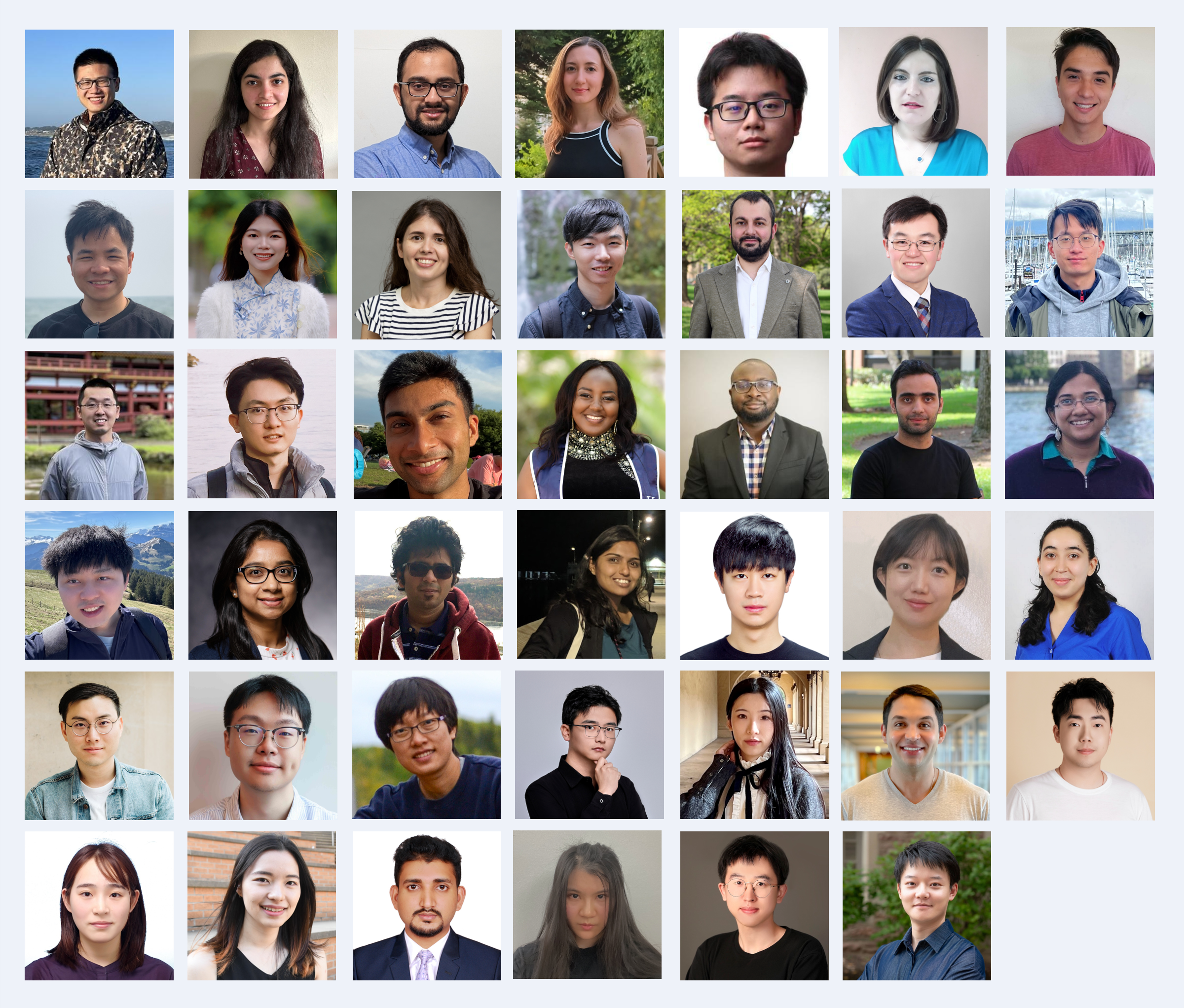

Introducing the 2024 MLCommons Rising Stars

Fostering a community of talented young researchers at the intersection of ML and systems research