Featured Articles

MLPerf Tiny v1.2 Results

MLPerf Tiny results demonstrate increased industry adoption of AI through software support

Announcing MLCommons AI Safety v0.5 Proof of Concept

Achieving a major milestone towards standard benchmarks for evaluating AI Safety

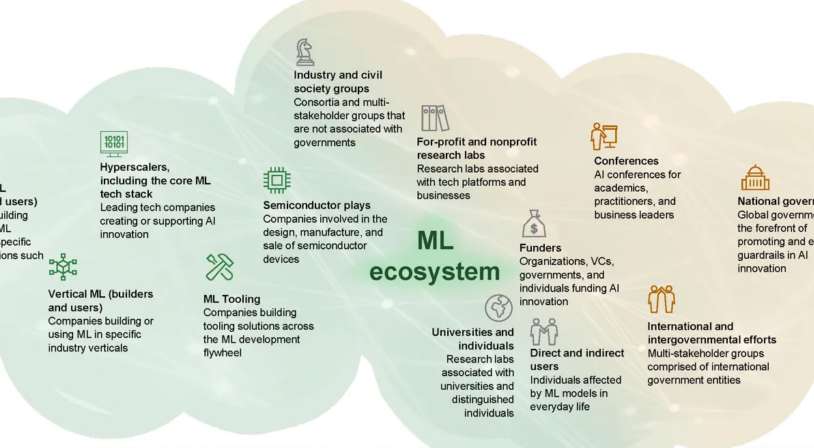

The AI Safety Ecosystem Needs Standard Benchmarks

IEEE Spectrum contributed blog excerpt, authored by the MLCommons AI Safety working group

New MLPerf Inference Benchmark Results Highlight The Rapid Growth of Generative AI Models

With 70 billion parameters, Llama 2 70B is the largest model added to the MLPerf Inference benchmark suite.

Llama 2 70B: An MLPerf Inference Benchmark for Large Language Models

MLPerf Inference task force shares insights on the selection of Llama 2 for the latest MLPerf Inference benchmark round.

Perspective: Unlocking ML requires an ecosystem approach

Factories need good roads to deliver value

Blog

The AI Safety Ecosystem Needs Standard Benchmarks

IEEE Spectrum contributed blog excerpt, authored by the MLCommons AI Safety working group

Our comments to the NTIA on Open Foundation models

Open foundation models play an important role in developing AI safety benchmarks.

Llama 2 70B: An MLPerf Inference Benchmark for Large Language Models

MLPerf Inference task force shares insights on the selection of Llama 2 for the latest MLPerf Inference benchmark round.

News

MLPerf Tiny v1.2 Results

MLPerf Tiny results demonstrate increased industry adoption of AI through software support

Announcing MLCommons AI Safety v0.5 Proof of Concept

Achieving a major milestone towards standard benchmarks for evaluating AI Safety

New MLPerf Inference Benchmark Results Highlight The Rapid Growth of Generative AI Models

With 70 billion parameters, Llama 2 70B is the largest model added to the MLPerf Inference benchmark suite.