Medical Artificial Intelligence (AI) has tremendous potential to advance healthcare and improve lives around the world, but successful clinical translation requires evaluating AI models on large and diverse real-world datasets. MLCommons®, an open global engineering consortium dedicated to making machine learning better for everyone, today announces a major milestone toward addressing this challenge: the publication of "Federated benchmarking of medical artificial intelligence with MedPerf" in the journal Nature Machine Intelligence.

MedPerf is an open benchmarking platform that efficiently evaluates AI models on diverse real-world medical data to support clinical efficacy, while prioritizing patient privacy and mitigating legal and regulatory risks. The Nature Machine Intelligence publication is the result of a two-year global collaboration spearheaded by the MLCommons Medical Working Group, with participation from experts at more than 20 companies, more than 20 academic institutions, and nine hospitals across 13 countries.

Validating Medical AI Models Across Diverse Populations

Medical AI models are usually trained on data from limited and specific clinical settings, which can introduce unintended bias with respect to particular patient populations. This lack of generalizability can reduce the real-world impact of Medical AI. However, gaining access to larger and more diverse datasets is difficult because data owners are constrained by privacy, legal, and regulatory risks. MedPerf improves Medical AI by making data from around the world easily and safely available to AI researchers for model evaluation, which helps reduce bias and improve generalizability and clinical impact. “Our goal is to use benchmarking as a tool to enhance Medical AI,” said Dr. Alex Karargyris, MLCommons Medical co-chair. “Neutral and scientific testing of models on large and diverse datasets can improve effectiveness, reduce bias, build public trust, and support regulatory compliance.”

Federated Evaluation Enables Validation of AI While Ensuring Data Privacy

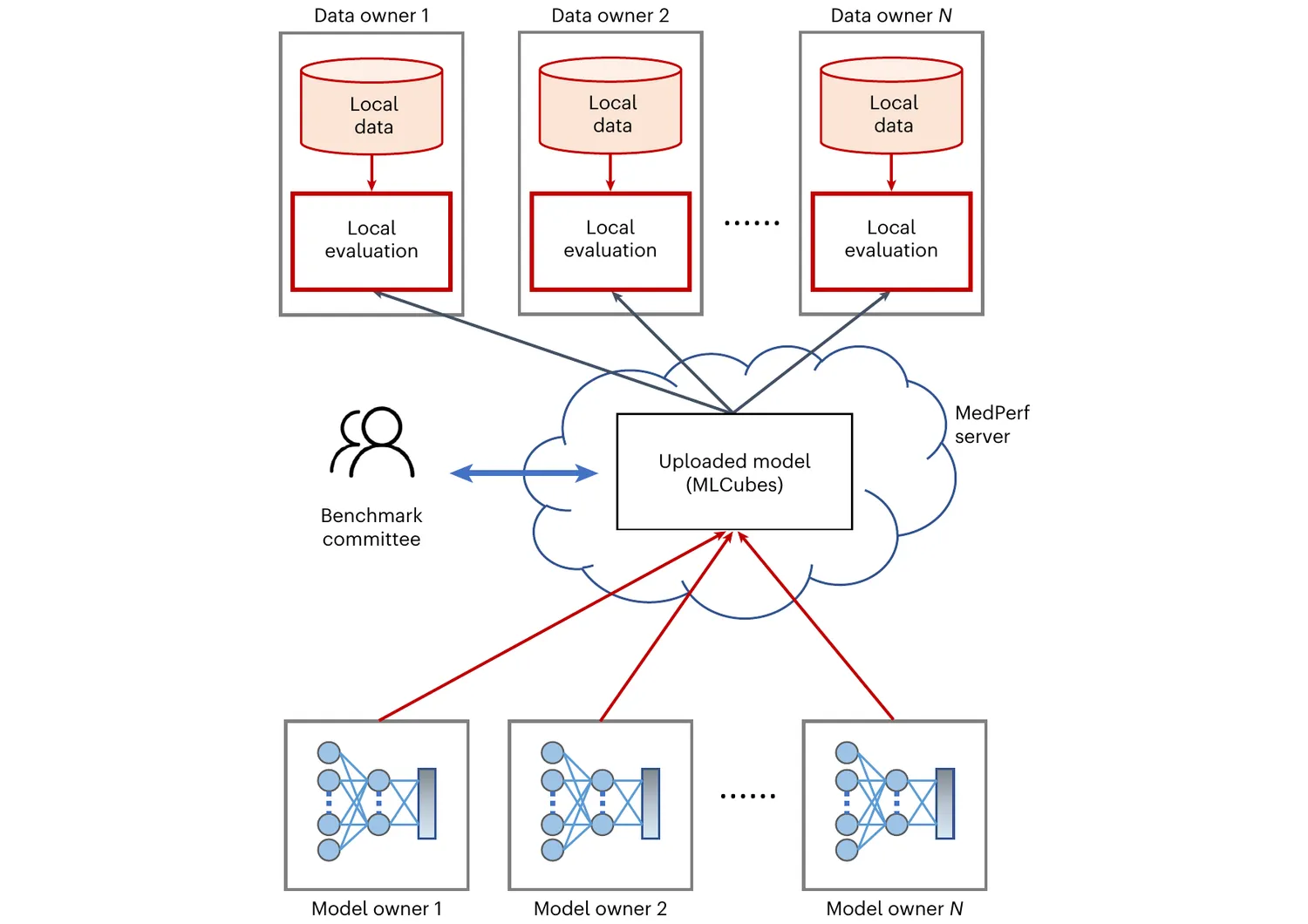

Critically, MedPerf enables healthcare organizations to assess and validate AI models in an efficient and human-supervised process without accessing patient data. The platform’s design relies on federated evaluation in which medical AI models are remotely deployed and evaluated within the premises of data providers. This approach alleviates data privacy concerns and builds trust among healthcare stakeholders, leading to more effective collaboration.

Intel Labs’ Research Scientist and MedPerf technical project lead Micah Sheller said, “Transparency lies at the core of the MedPerf security and privacy design. Information security officers must know about every bit of information they are being asked to share and with whom it will be shared. This requirement makes an open-source, non-profit consortium like MLCommons the right place to build MedPerf.”

Federated evaluation on MedPerf. Machine learning models are distributed to data owners for local evaluation on their premises without the need or requirement to extract their data to a central location.
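The federated evaluation pattern described above can be illustrated with a short Python sketch. This is a minimal, hypothetical illustration under simplifying assumptions, not MedPerf's actual API: `DataOwnerSite`, `evaluate_locally`, `federated_evaluation`, and `pooled_accuracy` are invented names, the toy "models" are plain functions, and accuracy stands in for task-specific metrics such as segmentation overlap. The point it shows is that each site runs every model on its own records and returns only summary metrics, so results can be compared across sites without patient data leaving the premises.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence

# Hypothetical model type: maps one input case to a predicted label.
Model = Callable[[Sequence[float]], int]


@dataclass
class DataOwnerSite:
    """Hypothetical data owner; its records stay inside this object."""
    name: str
    _features: List[List[float]]
    _labels: List[int]

    def evaluate_locally(self, model: Model) -> Dict[str, float]:
        """Run the model on-premises and return only aggregate metrics."""
        correct = sum(
            1 for x, y in zip(self._features, self._labels) if model(x) == y
        )
        n = len(self._labels)
        # Only these summary numbers leave the site, never the raw records.
        return {"accuracy": correct / n, "n_cases": float(n)}


def federated_evaluation(
    models: Dict[str, Model], sites: List[DataOwnerSite]
) -> Dict[str, Dict[str, Dict[str, float]]]:
    """Send every model to every site and collect per-site metrics."""
    results: Dict[str, Dict[str, Dict[str, float]]] = {}
    for model_name, model in models.items():
        results[model_name] = {
            site.name: site.evaluate_locally(model) for site in sites
        }
    return results


def pooled_accuracy(per_site: Dict[str, Dict[str, float]]) -> float:
    """Case-weighted accuracy across sites, computed from summaries alone."""
    total = sum(m["n_cases"] for m in per_site.values())
    return sum(m["accuracy"] * m["n_cases"] for m in per_site.values()) / total


if __name__ == "__main__":
    # Toy, made-up data for two hypothetical hospitals.
    sites = [
        DataOwnerSite("hospital_a", [[0.2], [0.9], [0.7]], [0, 1, 1]),
        DataOwnerSite("hospital_b", [[0.4], [0.6]], [0, 0]),
    ]
    models = {
        "threshold_0.5": lambda x: int(x[0] > 0.5),
        "always_positive": lambda x: 1,
    }
    results = federated_evaluation(models, sites)
    for name, per_site in results.items():
        print(name, round(pooled_accuracy(per_site), 2))
    # prints "threshold_0.5 0.8" then "always_positive 0.4"
```

Returning the case count alongside each metric is a design choice of this sketch: it lets the coordinating side compute a case-weighted pooled score from summaries alone. A real deployment would also include the human review step mentioned above, in which data owners approve what leaves their premises.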

MedPerf’s Orchestration Capabilities Streamline Research from Months to Hours

MedPerf’s orchestration and workflow automation capabilities can significantly accelerate federated evaluation studies. “With MedPerf’s orchestration capabilities we can evaluate multiple AI models through the same collaborators in hours instead of months,” explains Dr. Spyridon Bakas, Assistant Professor at the University of Pennsylvania’s Perelman School of Medicine and Vice Chair for Benchmarking and Clinical Translation of the MLCommons Medical Working Group.

This efficiency was demonstrated in the Federated Tumor Segmentation (FeTS) Challenge, the largest federated experiment on glioblastoma. The FeTS Challenge spans 32 sites across six continents and successfully used MedPerf to benchmark 41 different models. Thanks to active involvement by teams at Dana-Farber, IHU Strasbourg, Intel, Nutanix, and the University of Pennsylvania, MedPerf was also validated through a series of pilot studies representative of academic medical research. These studies covered brain tumor segmentation, pancreas segmentation, and surgical workflow phase recognition, using both public and private data on on-premises and cloud infrastructure.

“It’s exciting to see the results of MedPerf’s Medical AI pilot studies, where all the models ran on hospitals’ systems, leveraging pre-agreed data standards, without sharing any data,” said Dr. Renato Umeton, Director of AI Operations and Data Science Services in the Informatics & Analytics department of Dana-Farber Cancer Institute and Co-Chair of the MLCommons Medical Working Group. “The results reinforce that benchmarks through federated evaluation are a step in the right direction toward more inclusive AI-enabled medicine.”

MedPerf partnered with Sage Bionetworks to build the ad hoc components required for the FeTS 2022 and BraTS 2023 challenges on top of the Synapse platform, serving more than 30 hospitals around the world. It also partnered with Hugging Face to leverage the Hugging Face Hub and demonstrate how new benchmarks can use that infrastructure. To enable wider adoption, MedPerf supports popular ML libraries that offer ease of use, flexibility, and performance, such as fast.ai. It also supports private AI models and AI models available only through an API, such as Microsoft Azure OpenAI Service, Epic Cognitive Computing, and Hugging Face Inference Endpoints.

Extending MedPerf to Other Biomedical Tasks

While initial uses of MedPerf focused on radiology, it is a flexible platform that supports any biomedical task. Through its sister project GaNDLF, which focuses on building ML pipelines quickly and easily, MedPerf can accommodate tasks such as digital pathology and omics. To support the open community, MedPerf is also developing examples for specialized low-code libraries, such as PathML and SlideFlow for computational pathology, Spark NLP, and MONAI, to fill the data engineering gap and provide access to state-of-the-art pretrained computer vision and natural language processing models.

A Foundation to Evaluate and Advance Medical AI

MedPerf is a foundational step towards the MLCommons Medical Working Group’s mission to develop benchmarks and best practices to accelerate Medical AI through an open, neutral, and scientific approach. The team believes that such efforts will increase trust in Medical AI, accelerate ML adoption in clinical settings, and ultimately enable Medical AI to personalize patient treatment, reduce costs, and improve both healthcare provider and patient experience.

The team would like to acknowledge the publication co-authors for their valuable contributions.

Call for Participation

To continue to drive Medical AI innovation and bridge the gap between AI research and real-world clinical impact, there is a critical need for broad collaboration; reproducible, standardized, and open computation; and a passionate community that spans academia, industry, and clinical practice. We invite healthcare professionals, patient advocacy groups, AI researchers, data owners, and regulators to join the MedPerf effort.

Publication

Karargyris, A., Umeton, R., Sheller, M.J. et al. Federated benchmarking of medical artificial intelligence with MedPerf. Nat Mach Intell 5, 799–810 (2023).